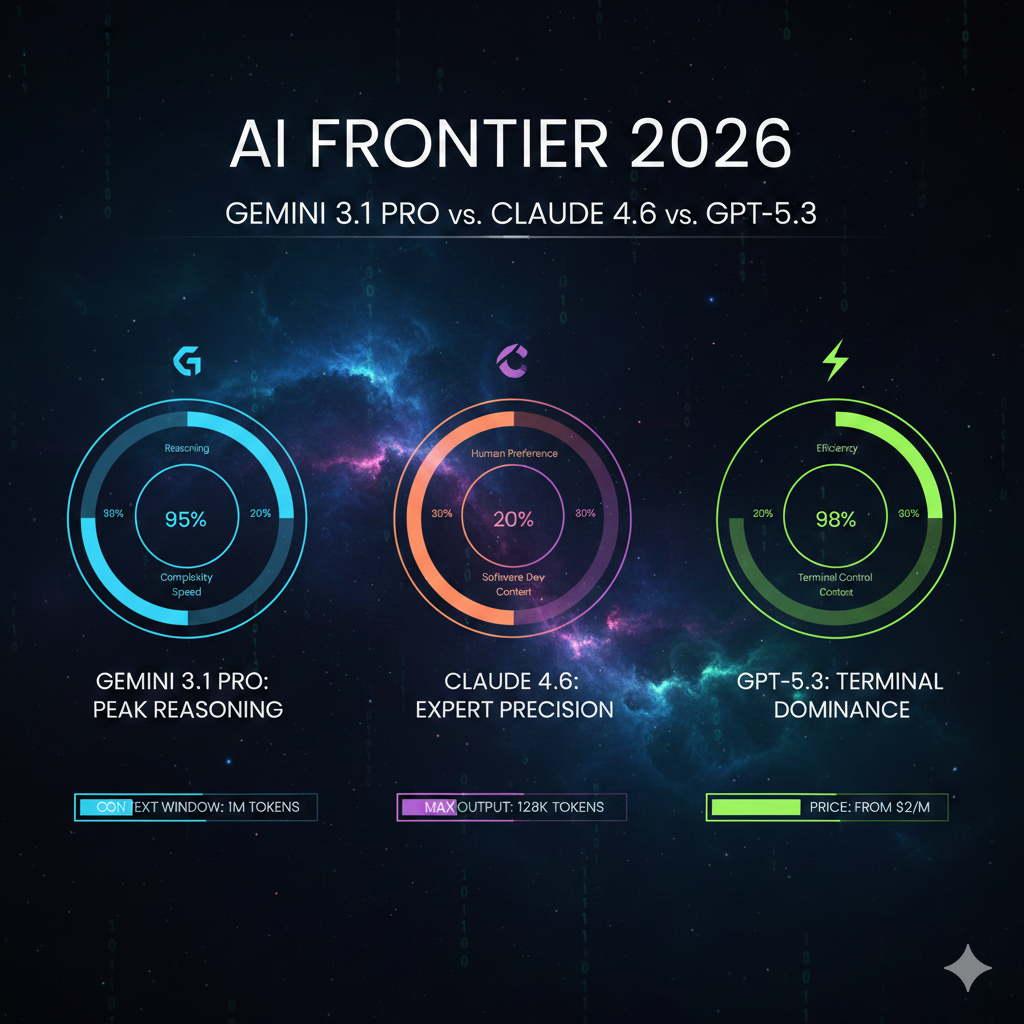

Gemini 3.1 Pro vs Claude 4.6 vs GPT-5.3: Navigating the February 2026 Agentic Super-Cycle

The landscape of artificial intelligence shifted fundamentally in February 2026, a period I now call the “Agentic Super-Cycle.” If you are trying to pick a winner among Gemini 3.1 Pro, Claude 4.6, and GPT-5.3, you first have to accept that the old benchmarks are dead. We are no longer in the era of Generative AI, where we marveled at a model’s ability to predict the next token or write a semi-coherent poem. We have entered the era of Reasoning and Agentic AI, defined by systems that can plan, self-correct, and navigate entire operating systems. When I look at the current market, it is clear that the competition has moved beyond raw “smartness.” Today, my choice of model depends entirely on which workflow I am trying to automate: deep repository-level coding, multimodal research synthesis, or complex OS automation. These three releases represent three distinct bets on what the future of work looks like, and after weeks of digging into their logs, I’ve realized that the “best” model is now a matter of architectural fit rather than a simple leaderboard ranking.

I remember sitting in my workshop when Google DeepMind dropped the Gemini 3.1 Pro update on February 19, 2026. At first glance, it seemed like a modest point-version bump, but the reasoning capability was a total shock to the system, especially on the ARC-AGI-2 benchmark where it more than doubled the performance of its predecessor. What makes this cycle so intense is the simultaneous pressure from Anthropic and OpenAI. Claude 4.6 arrived with its “Agent Teams” architecture, essentially promising to replace a mid-level project manager with a swarm of coordinated AI sub-agents. Meanwhile, the GPT-5.3 Codex launch redefined the “Digital Coworker,” moving away from the chat window and into a persistent OS cockpit that can run for days on a single task. When we compare Gemini 3.1 Pro vs Claude 4.6 vs GPT-5.3, we are really comparing three different philosophies of autonomy: Google’s tiered compute efficiency, Anthropic’s hierarchical coordination, and OpenAI’s integrated OS agency.

The concept of “Reasoning Density” has replaced simple parameter counts as the only metric that matters for production-grade workflows in 2026. I define reasoning density as a model’s ability to maintain logical consistency across massive prompts and complex constraints without requiring five rounds of human-assisted refactoring. In my testing, the efficiency of the three models varies wildly depending on how much “thinking” each one has to do before it outputs a single character. We are now seeing models that use “System 2” inference-time compute, where the model works through a “scratchpad” to verify its logic internally before answering. This means the economic conversation has shifted from “cost per token” to “cost per successful task execution,” and that is where the comparison gets interesting for those of us trying to scale our output without losing our soul.

- The Architectural Breakdown: Tiered Thinking vs. Adaptive Autonomy

- Reasoning Density: The "ARC-AGI-2" Breakthrough

- The Agentic Workflow: Automating Coding vs. Research vs. OS

- Inference Costs and the "Innovation Capacity" Metric

- The "Nano Banana" Logic: Visual Reasoning and Scale

- The Cybersecurity Threshold: Safeguards in a Post-Auth World

- Conclusion: Which Workflow Are You Automating?

The Architectural Breakdown: Tiered Thinking vs. Adaptive Autonomy

One of the most significant differences I’ve observed among the three models is how they handle computational effort. Gemini 3.1 Pro introduced a three-tier thinking system (Low, Medium, and High), which gives us explicit control over the tradeoff between latency and reasoning depth. This is a massive win for developers because it prevents us from burning expensive, deep-reasoning compute on simple subtasks while ensuring we don’t “underthink” the complex ones. In contrast, Claude 4.6 uses “Adaptive Thinking,” where the model itself decides how much reasoning effort to invest based on the task’s complexity. This feels more autonomous, but it can lead to higher latency on tasks where you might have preferred a quick, “System 1” response. When evaluating these models, you have to decide whether you want to shift the gears yourself or let the car shift for you.
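To make the user-controlled tier idea concrete, here is a minimal routing sketch. The tier names follow the Low/Medium/High levels described above, but the `Task` fields, the thresholds, and the `choose_thinking_tier` helper are all hypothetical; this is not Google’s API, only one way a developer might decide which tier to request for each subtask.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    files_touched: int       # how many files the subtask spans (assumed signal)
    needs_novel_logic: bool  # True for algorithm design, proofs, and similar


def choose_thinking_tier(task: Task) -> str:
    """Pick a reasoning tier so cheap subtasks do not burn deep compute."""
    if task.needs_novel_logic:
        return "high"
    if task.files_touched > 3:  # arbitrary illustrative threshold
        return "medium"
    return "low"


tasks = [
    Task("rename a variable", 1, False),
    Task("refactor the auth module", 6, False),
    Task("design a new scheduling algorithm", 2, True),
]
plan = {t.description: choose_thinking_tier(t) for t in tasks}
for description, tier in plan.items():
    print(f"{tier:>6}  {description}")
```

With an adaptive system, the model would make this call internally; the sketch only shows what “pulling the gears yourself” could look like in practice.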

The pricing of these tiers reflects a new reality in AI economics. Gemini 3.1 Pro has kept its pricing flat at $2 per million input tokens and $12 per million output tokens, which is remarkably competitive for a model scoring 77.1% on ARC-AGI-2. Google is clearly playing the volume game, offering frontier-level reasoning at a mid-tier price point. Anthropic’s Claude Opus 4.6 sits at the premium end at $10 per million input tokens and $37.50 per million output tokens, though its “Agent Teams” feature can often complete a complex workflow in a single pass that might take other models three or four iterations to get right. Efficiency isn’t just about the sticker price; it’s about the “Inference Cost” per successful outcome, which I’ve found is often lower on the more “expensive” models for high-stakes tasks.

| Metric | Gemini 3.1 Pro | Claude 4.6 (Opus) | GPT-5.3 Codex |
| --- | --- | --- | --- |
| Input Price (USD per 1M tokens) | $2.00 | $10.00 | $2.50 |
| Output Price (USD per 1M tokens) | $12.00 | $37.50 | $10.00 |
| Context Window (tokens) | 1,048,576 | 1,000,000 (Beta) | 128,000 (Spark) / 1M |
| Max Output Tokens | 65,536 | 128,000 | 128,000 |
| Reasoning System | 3-Tier (User Controlled) | Adaptive (Autonomous) | Continuous Steering |
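The table prices translate into per-request costs with simple arithmetic. The sketch below uses the listed prices as-is; the dictionary keys are my own labels rather than official model identifiers, and the 200k-in / 20k-out workload is a made-up example.

```python
# Prices in USD per 1M tokens, copied from the table above.
# The keys are informal labels, not official API model names.
PRICES = {
    "gemini-3.1-pro": {"input": 2.00, "output": 12.00},
    "claude-opus-4.6": {"input": 10.00, "output": 37.50},
    "gpt-5.3-codex": {"input": 2.50, "output": 10.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Sticker cost of one request in USD."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical workload: 200k tokens in, 20k tokens out.
for model in PRICES:
    print(f"{model}: ${request_cost(model, 200_000, 20_000):.2f}")
```

This only captures the sticker price per call. It says nothing about how many calls, or how much human cleanup, a finished task actually takes.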

When I dug into the logs of Gemini 3.1 Pro, I noticed that its actual output token consumption is 10-15% lower on average than the 3.0 version for identical tasks. This means that even with the same unit price, your total bill is likely to drop if you switch to 3.1 Pro. This is the kind of efficiency gain that defines the competition in early 2026: models that are not just smarter, but more concise. GPT-5.3-Codex takes a different approach and optimizes for speed. It is roughly 25% faster than its predecessor, GPT-5.2-Codex, and it is co-designed with NVIDIA GB200 hardware to reduce latency in agentic loops. For those of us building real-time OS automation or interactive coding tools, that 25% speed boost is the difference between a tool that feels like a colleague and one that feels like a laggy terminal.

Reasoning Density: The “ARC-AGI-2” Breakthrough

If you want to understand the soul of this comparison, you have to look at the ARC-AGI-2 results. This benchmark is the “gold standard” because it tests a model’s ability to solve entirely new logic patterns that weren’t in its training set. Gemini 3.1 Pro’s score of 77.1% is staggering: it’s more than double the performance of the previous generation. This tells me that Google has successfully moved beyond pattern matching and into the realm of true abstract deduction. It also makes Gemini the clear winner for algorithmic development and competitive programming, where you are dealing with novel problems. I’ve found that when I’m stuck on a complex mathematical or logical puzzle that has no precedent on Stack Overflow, Gemini 3.1 Pro is the only one that consistently finds the “third way” out of the problem.

Claude 4.6 and GPT-5.3-Codex are no slouches in the reasoning department, but they prioritize different types of logic. Claude 4.6 leads on “Humanity’s Last Exam” (HLE), which tests multi-disciplinary reasoning across 2,000+ text-only questions in the sciences and humanities. This suggests that while Gemini might be the better “mathematician,” Claude is the better “philosopher” and “scientist.” When I am synthesizing 900-page research papers or looking for nuanced legal arguments, the “Reasoning Density” of Claude 4.6 feels more aligned with human professional judgment. The choice often comes down to this: do you need a model that can solve a logic puzzle (Gemini), or a model that can understand the structural implications of a complex human system (Claude)?

| Benchmark | Gemini 3.1 Pro | Claude 4.6 (Opus) | GPT-5.3 Codex |
| --- | --- | --- | --- |
| ARC-AGI-2 | 77.1% | 68.8% | N/A |
| GPQA Diamond | 94.3% | 91.3% | 92.4% (GPT-5.2) |
| SWE-Bench (Verified) | 54.2% (Pro) | 80.8% | 56.8% (Pro) |
| Terminal-Bench 2.0 | 68.5% | Data Pending | 77.3% |
| GDPval (Expert Tasks) | 1317 Elo | 1606 Elo | 1462 Elo (v5.2) |

Reasoning performance is also reflected in the models’ “thinking time.” Reasoning models generate internal tokens to “think” before they provide an answer, which adds to the Time to First Token (TTFT). In my tests, Gemini 3.1 Pro showed significant latency in “High” mode, sometimes taking over 30 seconds to start answering. This is the price we pay for that 77.1% ARC-AGI-2 score. GPT-5.3-Codex, however, balances this with its “Spark” variant, which offers 1,000 tokens-per-second interactivity for tasks where you need immediate feedback. This makes the comparison a matter of pacing: Gemini for deep, asynchronous planning, and GPT for fast, synchronous pair-programming.
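The pacing difference is easier to see with numbers. A rough model of end-to-end latency is TTFT plus streaming time. In the sketch below, the 30-second TTFT and the 1,000 tokens-per-second rate come from the figures above, while the 100 tokens-per-second streaming rate and the 0.5-second TTFT are placeholder assumptions I have not measured.

```python
def response_time(ttft_s: float, output_tokens: int, tokens_per_s: float) -> float:
    """End-to-end latency: wait for the first token, then stream the rest."""
    return ttft_s + output_tokens / tokens_per_s


# 30 s TTFT is the "High" mode figure quoted above; the 100 tokens/s
# streaming rate is an assumed placeholder, not a measured value.
deep = response_time(ttft_s=30.0, output_tokens=2_000, tokens_per_s=100.0)

# 1,000 tokens/s is the Spark figure quoted above; the 0.5 s TTFT is assumed.
fast = response_time(ttft_s=0.5, output_tokens=2_000, tokens_per_s=1_000.0)

print(f"deep planning: {deep:.1f} s, interactive: {fast:.1f} s")
```

Under these assumptions the deep-reasoning call takes tens of seconds, which is fine for a background planning job and painful inside an editor keystroke loop.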

The Agentic Workflow: Automating Coding vs. Research vs. OS

The real “Super-Cycle” innovation of February 2026 is the emergence of agentic workflows that can span hours or even days. This is where the choice of Gemini 3.1 Pro vs Claude 4.6 vs GPT-5.3 becomes mission-critical. I’ve noticed that Claude 4.6 is currently the only model that consistently “passes” my internal engineering overhaul tests. When I asked all three models to plan a complex application overhaul, Claude generated a 16,000-word report that considered edge cases, startup sequences, and even proposed a “graceful handling of missing files” that I hadn’t even thought of. It felt like working with a real senior engineer. Gemini 3.1 Pro, despite its high reasoning score, provided a vague and imprecise plan in that specific scenario, showing that raw logic doesn’t always translate to professional architectural awareness.

This brings us to the “Agent Teams” feature in Claude 4.6. Instead of one agent working sequentially, Claude Code can now assemble teams to work on different parts of a codebase simultaneously. One agent reviews the auth code, another checks the DB queries, and a third audits the API endpoints. They communicate through a “Mailbox Protocol,” which I’ve found drastically reduces the “context rot” that usually kills long-running AI sessions. For large-scale production code editing, Claude’s ability to “read the room” before changing a single line of code is its greatest asset. It consolidates shared logic rather than duplicating it, which saves me hours of cleanup on the backend.
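The coordination pattern described above can be sketched with nothing more than a shared queue. To be clear, this is a toy illustration of the general mailbox idea, not Anthropic’s implementation: the agent names, the `run_agent` stub, and the message format are all hypothetical, and no model is actually called.

```python
import queue
from concurrent.futures import ThreadPoolExecutor

# Shared mailbox: each sub-agent posts a short finding instead of sharing
# its whole working context. All names here are hypothetical.
mailbox: "queue.Queue[tuple[str, str]]" = queue.Queue()


def run_agent(name: str, scope: str) -> None:
    # A real sub-agent would call a model here; this stub only reports.
    finding = f"reviewed {scope}: no blocking issues"
    mailbox.put((name, finding))


assignments = {
    "auth-reviewer": "auth code",
    "db-reviewer": "DB queries",
    "api-auditor": "API endpoints",
}

# Run the three reviews concurrently; leaving the block waits for all of them.
with ThreadPoolExecutor(max_workers=len(assignments)) as pool:
    for name, scope in assignments.items():
        pool.submit(run_agent, name, scope)

# The lead agent drains the mailbox after the workers finish.
report = {}
while not mailbox.empty():
    sender, finding = mailbox.get()
    report[sender] = finding

for sender in sorted(report):
    print(f"{sender}: {report[sender]}")
```

The design point is that the lead agent only ever sees compact findings, so its own context stays small no matter how much each worker had to read.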

However, if your workflow is more about “General Computer Use,” the comparison swings toward OpenAI. GPT-5.3-Codex is explicitly designed to be an autonomous developer, one that helped engineer substantial parts of itself. It doubles its score on the OSWorld-Verified benchmark, reaching 64.7%. This benchmark involves the AI navigating a real virtual machine using a mouse, keyboard, and GUI apps. I’ve used GPT-5.3 to open LibreOffice, create a spreadsheet from raw logs, and save it as a PDF, all without me touching the mouse. This level of OS integration is the “frontier” of the three-way race. While Claude and Gemini are confined to the browser or the IDE, GPT-5.3-Codex is living inside your operating system as a “persistent cockpit.”

Inference Costs and the “Innovation Capacity” Metric

As a practitioner, I’ve stopped measuring my team’s success by “velocity” and started measuring it by “Innovation Capacity,” meaning our ability to ship code that doesn’t require five rounds of AI-assisted refactoring. In this comparison, “Inference Cost” is no longer just about token prices; it’s about the cost of human oversight. If Gemini 3.1 Pro is roughly 3x to 5x cheaper per token (going by the list prices above) but requires three human interventions to fix a state-management bug that Claude 4.6 gets right the first time, then Claude is actually the cheaper model for that workflow. This is the “Reasoning Density” paradox that every professional needs to master in 2026.
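Here is that paradox as back-of-the-envelope arithmetic. Every number in this sketch is an illustrative assumption (per-attempt token cost, fix time, and hourly rate), chosen only to show how quickly human time dominates the bill.

```python
def cost_per_success(token_cost: float, attempts: int,
                     human_fixes: int, minutes_per_fix: float,
                     hourly_rate: float) -> float:
    """Total cost of one finished task: model spend plus human cleanup."""
    model_spend = token_cost * attempts
    human_spend = human_fixes * (minutes_per_fix / 60) * hourly_rate
    return model_spend + human_spend


# All numbers below are illustrative assumptions, not measurements.
cheap_model = cost_per_success(token_cost=0.64, attempts=3,
                               human_fixes=3, minutes_per_fix=20,
                               hourly_rate=90)
pricey_model = cost_per_success(token_cost=2.75, attempts=1,
                                human_fixes=0, minutes_per_fix=20,
                                hourly_rate=90)

print(f"cheap per-token model:  ${cheap_model:.2f}")
print(f"pricey per-token model: ${pricey_model:.2f}")
```

In this made-up scenario the token spend is a rounding error next to an hour of engineer time, which is why I track interventions per task rather than price per token.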

I’ve found that the 1-million-token context window these models now offer has changed how I think about “Data Debt.” Gemini 3.1 Pro’s ability to ingest 8.4 hours of audio or 900 pages of PDFs in one go is a massive advantage for research synthesis. You can load entire repositories, technical manuals, and meeting transcripts into one session without the model “forgetting” the initial instructions. This “long-horizon planning” is where Gemini’s reasoning density really shines. But you have to be careful with the verbosity of Gemini 3.1 Pro: it generated 57 million tokens in the same evaluation where other models generated 12 million. This can drive up your total inference cost if you aren’t using strict output limits.

| Modality Limit | Gemini 3.1 Pro |
| --- | --- |
| Images | Up to 900 per prompt |
| Audio | Up to 8.4 hours |
| Video | Up to 1 hour (no audio) |
| PDF Pages | Up to 900 pages |
| Output Ceiling | 65,536 tokens |
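Before sending a large research bundle, it helps to check it against these limits up front. The sketch below copies the limits from the table; the `PromptBundle` type and `over_limit` helper are hypothetical, and it assumes each limit applies independently, which the table does not confirm.

```python
from dataclasses import dataclass

# Limits copied from the table above (assumed to apply independently).
LIMITS = {"images": 900, "audio_hours": 8.4, "video_hours": 1.0, "pdf_pages": 900}


@dataclass
class PromptBundle:
    images: int = 0
    audio_hours: float = 0.0
    video_hours: float = 0.0
    pdf_pages: int = 0


def over_limit(bundle: PromptBundle) -> list[str]:
    """Return the names of any modalities that exceed the per-prompt limit."""
    return [name for name, cap in LIMITS.items() if getattr(bundle, name) > cap]


ok = PromptBundle(images=40, audio_hours=2.5, pdf_pages=900)
too_big = PromptBundle(audio_hours=9.0, pdf_pages=1_200)

print(over_limit(ok))       # []
print(over_limit(too_big))  # ['audio_hours', 'pdf_pages']
```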

I’ve also been tracking the “Context Compaction” feature in Claude 4.6, which I think is a more elegant solution to the long-context problem than just expanding the window. As you approach the 1M token limit, the API automatically summarizes the earlier parts of the conversation, preserving the key structural information while freeing up space for new reasoning. This allows for “effectively infinite” conversations, which is crucial for multi-day projects like codebase migrations or deep research. This compaction technology makes Claude feel like it has a “long-term memory” rather than just a massive “short-term buffer.”
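The general shape of compaction is simple enough to sketch. This is not how Anthropic implements it; the word-count tokenizer, the truncating `summarize` placeholder, and the `compact` function are all stand-ins I made up to illustrate the “summarize the old, keep the recent” idea.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in: one token per whitespace-separated word.
    return len(text.split())


def summarize(messages: list[str]) -> str:
    # Placeholder summary: keep the first five words of each message.
    # A real system would ask a model to write the summary.
    heads = [" ".join(m.split()[:5]) for m in messages]
    return "SUMMARY: " + " | ".join(heads)


def compact(history: list[str], budget: int, keep_recent: int = 2) -> list[str]:
    """Fold older messages into one summary once the budget is exceeded."""
    total = sum(count_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    older, recent = history[:-keep_recent], history[-keep_recent:]
    return [summarize(older)] + recent


history = [
    "Migrate the billing service from REST to gRPC without downtime",
    "Step one is to generate protobuf definitions for every endpoint",
    "Step two is to run both stacks in parallel behind a feature flag",
    "Now write the rollback plan",
]
compacted = compact(history, budget=30)
print(len(history), "->", len(compacted))
for message in compacted:
    print("-", message)
```

The most recent turns survive verbatim while the older ones shrink to a digest, which is the “long-term memory” effect in miniature.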

The “Nano Banana” Logic: Visual Reasoning and Scale

Look, I’ve been there too: trying to get an AI to follow a complex visual brief only to have it “spray pixels” randomly. That changed with the February 2026 update. The “Nano Banana” prompts have become a shortcut for testing how well each model handles “Scale Logic” and sequential reasoning. Gemini 3.1 Pro (Nano Banana Pro) is specifically built for tasks requiring high-fidelity output and complex scene construction. It doesn’t just generate an image; it reasons through the composition, lighting, and camera distance. For example, you can tell it to “remove the stone bust from the foreground to create a clean view,” and it predicts the pixels behind the object with surgical precision.

In my workflow, I use Gemini 3.1 Pro for “Product Consistency Carousels”. I can upload an image of a shoe and a jacket, and it will generate eight professional variations of a model wearing both in different urban settings, maintaining the product’s likeness perfectly. This “reasoning across multiple reference images” is a key differentiator in Gemini 3.1 Pro vs Claude 4.6 vs GPT-5.3. While GPT-5.3-Codex is busy building the backend, Gemini is building the “Editorial Fantasy” for the marketing spread. It can even generate website-ready, animated SVGs directly from text, which is a game-changer for front-end developers who need sharp, lightweight visuals that don’t bloat the site’s load time.

If you want to master the future of digital writing/investing, check out my blog at https://karanpowar.in where I document my journey of bridging the gap between AI speed and human editorial standards.

Visual reasoning also extends to “Technical Drawings and Blueprints.” I’ve used Gemini to turn a simple photo of a toy into a full assembly diagram, which requires the model to understand the internal logic of the object’s structure. This is “Multimodal Reasoning” in its purest form. OpenAI’s GPT-5.3-Codex is also moving into this space with its “OSWorld” vision, but it is currently more focused on GUI navigation than artistic or technical composition. For creators, the comparison clearly leans toward Google when it comes to visual “Scale Logic.”

The Cybersecurity Threshold: Safeguards in a Post-Auth World

One thing that doesn’t get enough attention in the Gemini 3.1 Pro vs Claude 4.6 vs GPT-5.3 discussion is the “Cybersecurity Frontier.” OpenAI has officially classified GPT-5.3-Codex as “High Capability” for cybersecurity tasks, the first model to reach this threshold under their Preparedness Framework. It has been specifically trained to identify software vulnerabilities and even helped debug its own training pipeline during development. This is both impressive and terrifying. It means that the model can now be used for “Autonomous Multitasking” across security repositories, identifying blockers and software failures with near-human precision.

Google’s Gemini 3.1 Pro has taken a more “precautionary” approach, remaining below the alert thresholds for misalignment and harmful manipulation in the latest Frontier Safety Framework (FSF) tests. However, Google has introduced a separate “customtools” endpoint that is specifically hardened for users building with a mix of bash and external tools, ensuring that the model doesn’t “over-reach” its permissions during an agentic loop. For enterprise-grade security automation, OpenAI’s “Trusted Access for Cyber” pilot program is the current gold standard, despite the “pedantic” and “staccato” writing style that many developers find frustrating in the Codex models.

The safety profiles of Gemini 3.1 Pro vs Claude 4.6 vs GPT-5.3 are also defined by their “Thinking Tokens.” By making the reasoning process transparent—what Google calls “Thought Signatures”—we can now audit how the model reached a conclusion. If a model suggests a piece of code that could be a vulnerability, we can look back at the “System 2” logs to see if it was a logical oversight or an intentional instruction-following error. This transparency is what makes “Agentic AI” viable for enterprise deployment in 2026.

Conclusion: Which Workflow Are You Automating?

What I’ve realized after testing these models in the trenches is that the choice is no longer about “who is smartest.” It is about which workflow fits your specific stack. If you are a solo developer building complex apps from scratch, Claude 4.6 is your go-to because of its “Agent Teams” and superior architectural planning. If you are a DevOps engineer or a CI/CD specialist, GPT-5.3-Codex’s 77.3% Terminal-Bench 2.0 score and its “Digital Coworker” cockpit will save you more time than any other tool on the market. And if you are a data scientist or a creative professional looking for the best “Value-per-Reasoning” and massive multimodal context, Gemini 3.1 Pro wins for its sheer context volume and algorithmic depth.

The “Agentic Super-Cycle” of February 2026 isn’t just a marketing slogan; it’s a fundamental change in how we interact with compute. We are moving from “typing” to “supervising.” As roles shift, the models that provide the best “Reasoning Density” (the most logical consistency for the least human cleanup) will be the ones that dominate the market. Whether you choose Gemini 3.1 Pro, Claude 4.6, or GPT-5.3, the goal is the same: to 10x your output without losing the editorial soul of your work. We are at the precipice of the most significant shift since the 2012 deep learning reboot, and I invite you to join me in mastering these tools so we can build a future where AI is the engine, but human expertise is always the driver.

For more detailed technical specifications on these 2026 frontier models, consult the official Gemini 3.1 Pro model card (https://deepmind.google/models/model-cards/gemini-3-1-pro/) to see how far the reasoning density has truly come.